When importing files using XMLports, and especially when handling text files, file encoding is important. If the XMLport expects ASCII and you feed it UTF-8, you may get scrambled data. If the Unicode encoding of an input file does not match what the XMLport expects, the import may fail altogether. It is therefore worth making sure that the encoding is correct before you start gobbling input files.

At least it was for me. I am currently automating data migration for a major go-live, feeding some 30 input files to NAV, and I want to make sure they are all encoded correctly before I start a process that would take another geological era to complete.

Detecting encoding is not something that pure C/AL can help you with, so I naturally went the .NET way. My position is that there is nothing a computer can do that .NET cannot. My other position is that there is no problem I have that nobody before me has ever had. Combining these two, we reach yet another position of mine: there is nothing a computer can do for which there is no C# example, and I typically look for those on http://stackoverflow.com/

So, here’s the solution.

The first article I found was this:

http://stackoverflow.com/questions/3825390/effective-way-to-find-any-files-encoding

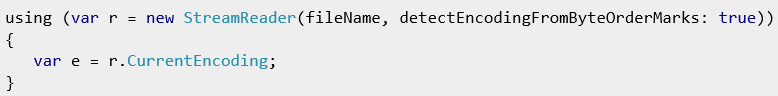

There is this piece of C# code there:

Technically, it works, but it has some limitations. Essentially, it always gives me UTF-8, even when files are ASCII-encoded. The StreamReader constructor reference on MSDN says this:

The detectEncodingFromByteOrderMarks parameter detects the encoding by looking at the first three bytes of the stream. It automatically recognizes UTF-8, little-endian Unicode, and big-endian Unicode text if the file starts with the appropriate byte order marks. Otherwise, the UTF8Encoding is used. See the Encoding.GetPreamble method for more information. (http://msdn.microsoft.com/en-us/library/9y86s1a9(v=vs.110).aspx)

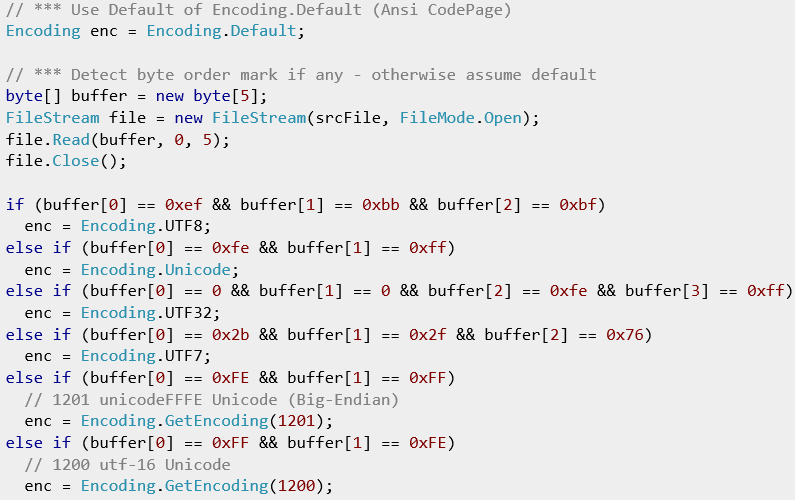
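To make the quoted behaviour concrete, here is a minimal Python sketch (not the original C# snippet) of the logic the MSDN note describes: look for a byte order mark in the leading bytes, and fall back to UTF-8 when none is present. The function name `detect_like_streamreader` is hypothetical and exists only for illustration.

```python
import codecs


def detect_like_streamreader(data: bytes) -> str:
    """Return an encoding name based only on a leading byte order mark.

    Hypothetical helper: it mirrors the documented rule that UTF-8 and
    little/big-endian UTF-16 are recognised by their BOM, and that UTF-8
    is assumed otherwise.
    """
    if data.startswith(codecs.BOM_UTF8):      # EF BB BF
        return "utf-8"
    if data.startswith(codecs.BOM_UTF16_LE):  # FF FE
        return "utf-16-le"
    if data.startswith(codecs.BOM_UTF16_BE):  # FE FF
        return "utf-16-be"
    # No BOM found: the default is used, whatever the file really contains.
    return "utf-8"
```

A plain ASCII file has no byte order mark, so a check like this lands in the final branch, which matches the "always UTF-8" result described above.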

So, I took a look at Encoding.GetPreamble to find out what it has to say about the whole thing. It explains the byte order marks, that is, the first few bytes, of the most common encodings, and it has a ready piece of C# code that shows how to use them. However, it only covers five common Unicode encodings, so I looked further and found some more ready-made C# functions that do the same.

For example, this:

http://stackoverflow.com/questions/4520184/how-to-detect-the-character-encoding-of-a-text-file

Okay, it has some minor bugs, but it provides the byte order mark for the UTF-7 encoding.

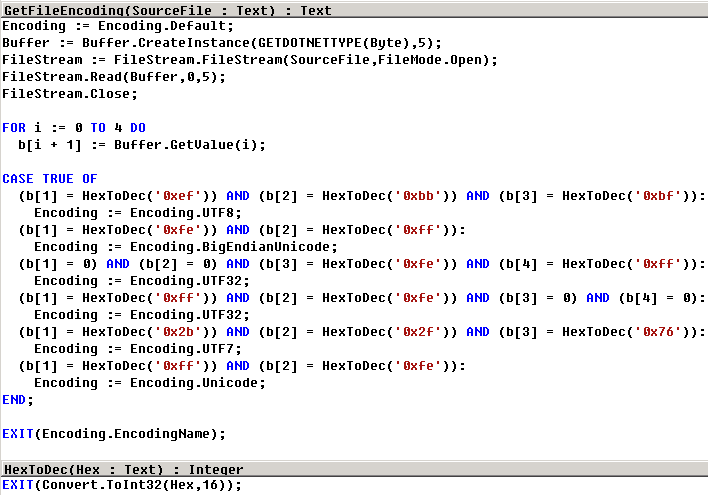
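As a rough illustration of the combined approach (the preambles of the common Unicode encodings plus the UTF-7 signature), here is a short Python sketch. It is not the C/AL object from this post. The name `guess_encoding` is hypothetical, and the final step, reporting ASCII when every byte is below 0x80, is my own assumption about a sensible fallback for files without a byte order mark.

```python
import codecs
from typing import Optional

# Longer marks come first: the UTF-32 LE mark starts with the UTF-16 LE mark.
BOMS = [
    (codecs.BOM_UTF32_LE, "utf-32-le"),  # FF FE 00 00
    (codecs.BOM_UTF32_BE, "utf-32-be"),  # 00 00 FE FF
    (codecs.BOM_UTF8, "utf-8"),          # EF BB BF
    (codecs.BOM_UTF16_LE, "utf-16-le"),  # FF FE
    (codecs.BOM_UTF16_BE, "utf-16-be"),  # FE FF
]

UTF7_PREFIX = b"+/v"   # 2B 2F 76
UTF7_FOURTH = b"89+/"  # the fourth byte is one of 38, 39, 2B, 2F


def guess_encoding(data: bytes) -> Optional[str]:
    """Guess an encoding from the leading bytes; None means 'unknown'."""
    for bom, name in BOMS:
        if data.startswith(bom):
            return name
    if data.startswith(UTF7_PREFIX) and len(data) > 3 and data[3] in UTF7_FOURTH:
        return "utf-7"
    # Assumption: no mark and only 7-bit bytes is treated as ASCII.
    if all(byte < 0x80 for byte in data):
        return "ascii"
    return None


def guess_file_encoding(path: str) -> Optional[str]:
    """Read a file as raw bytes and apply the same guess."""
    with open(path, "rb") as handle:
        return guess_encoding(handle.read())
```

Returning `None` for files that have no mark and contain bytes above 0x7F is deliberate in this sketch: such files could be BOM-less UTF-8 or any single-byte code page, and the leading bytes alone cannot tell them apart.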

In the end, I translated this into C/AL, and included information from MSDN, to come up with this:

It helped me, and I hope it helps you. You can download the object here: File_Encoding_Management.zip.

I just read this; it’s not just too much work, it’s explicitly the WRONG way to do it.

This is the right way; it’s similar, but for ASCII-like encodings you MUST look at the XML encoding declaration.

http://www.w3.org/TR/REC-xml/#sec-guessing

Robert: it is the wrong way if you have XML files. However, if you have text files, where is the XML encoding declaration? If you read carefully, I talk about text files (quote: “especially when handling text files”), and for text files the only way to do it is the way I have done it.

Ah, you said XMLPorts and I still get confused that “XML”-ports are used for non-XML data …

Surely a better name would be something like … “dataports” ?

Well, I didn’t invent the name, did I? 🙂 I did blog about that, though (https://dynamicsblog.wpcomstaging.com/blog/natural-selection-death-of-dataports)

Thank you very much, Vjeko, for this post; it solved the problem I was stuck on! I implemented your code and changed only one small thing: I added a VAR parameter of DotNet type Encoding to the function GetFileEncoding, because I call your function from another codeunit and needed this type for further processing of the file. Best regards!

Monica, you’re welcome and thanks for the comments!

Awesome! solves my problem in 10 minutes! Thanks a loooot!

Ohh that is super. This solved my problem very fast, too.

Big thank you.